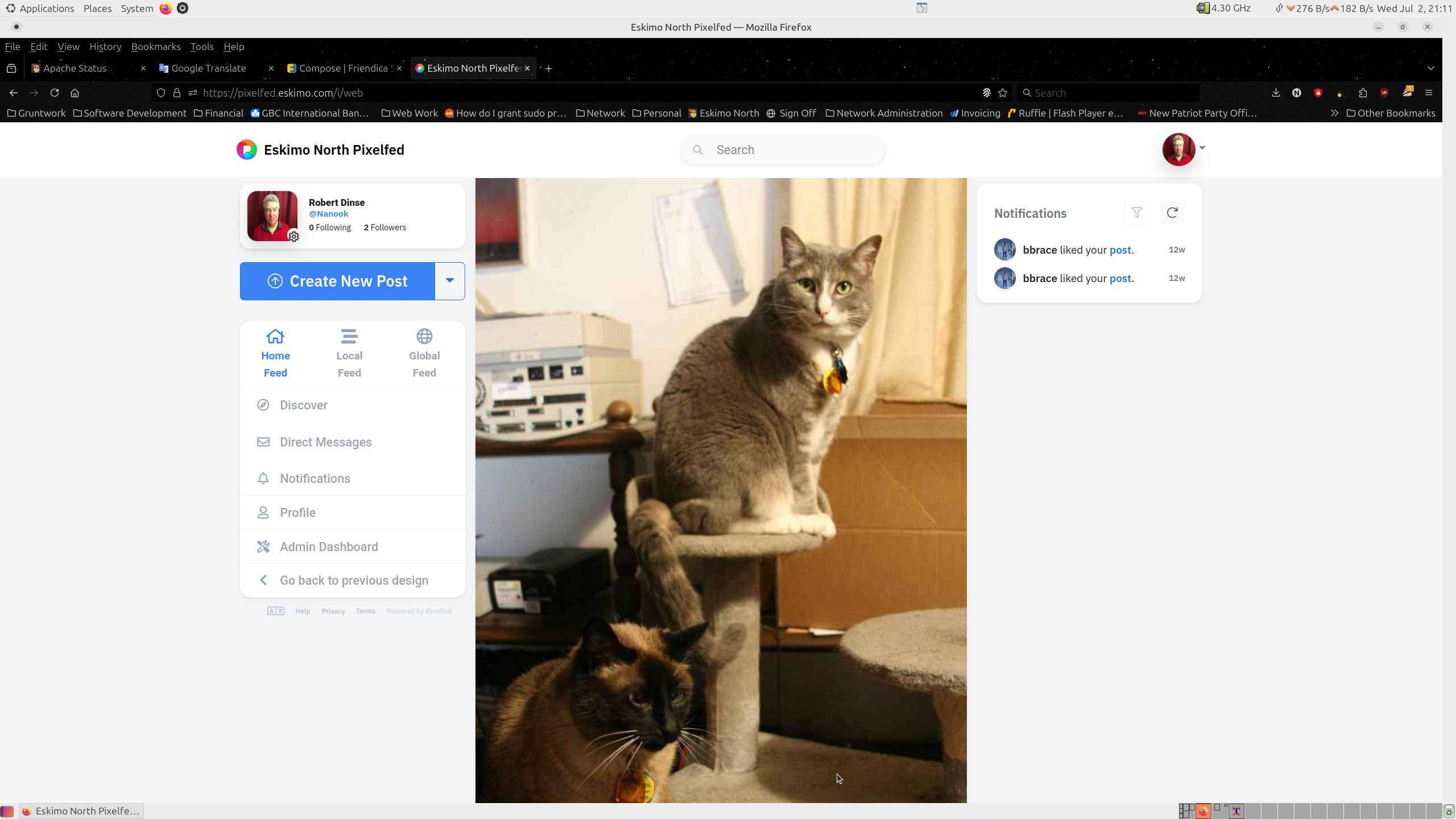

I finally got pixelfed.eskimo.com to federate properly, so it now shares images with the rest of the fediverse. You can see pictures from people you follow on other sites, and they can see yours.

Eskimo North Changes

I’ve added firewall rules to address the recent SYN and UDP floods. So far they have had the intended effect.

I’ve installed a new shell server, anduinos.eskimo.com. This is a Debian-derived server similar to popOS, except that the default graphical environment is GNOME configured to look like Windows. Unfortunately, this environment does not seem to work over VNC or x2go; instead you just get the default MATE or GNOME environment.

I’ve installed a new application called ‘qman’ on all the shell servers. It is an alternative to man for reading manual pages. Unlike man, it provides an index of sections and of the pages within each section, along with colored highlighting that makes reading manual pages a bit more pleasant.

Mail System Maintenance

The mail system is currently shut down so I can image the servers, that is, take a copy of each virtual machine image while it is down in case it gets corrupted or lost and needs to be restored in the future. I expect to have the mail subsystem back in service around 3AM.

Web Server Database

MariaDB, the database that provides SQL service for most of the web apps that need it (a handful use PostgreSQL), hung when some internal process failed to release a lock and everything else piled up behind it. I could not restart it with either systemd or mysqladmin, and even a regular kill signal did not work. kill -9 eventually did, but even the response to that was delayed by several minutes. There was nothing in either the kernel logs or the MariaDB logs that gave a clue as to why this happened, and it is a failure mode I have not seen before.
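For readers who script this kind of shutdown, here is a minimal Python sketch of the same escalation: ask politely with SIGTERM, wait a while, then fall back to SIGKILL. It is a generic illustration, not the procedure used on the web server. The names `stop_process` and `spawn_stubborn_child` are made up for this example, and the stubborn child is only a stand-in for a hung daemon. It assumes a POSIX system.

```python
import subprocess
import sys


def stop_process(proc: subprocess.Popen, grace_seconds: float = 5.0) -> str:
    """Send SIGTERM, then escalate to SIGKILL if the process is still alive.

    Returns the name of the signal that was needed.
    """
    proc.terminate()  # SIGTERM on POSIX
    try:
        proc.wait(timeout=grace_seconds)
        return "SIGTERM"
    except subprocess.TimeoutExpired:
        proc.kill()  # SIGKILL cannot be caught or ignored
        proc.wait()  # may still block if the process is stuck inside the kernel
        return "SIGKILL"


def spawn_stubborn_child() -> subprocess.Popen:
    """Hypothetical stand-in for a hung daemon: a child that ignores SIGTERM."""
    code = (
        "import signal, time\n"
        "signal.signal(signal.SIGTERM, signal.SIG_IGN)\n"
        "print('ready', flush=True)\n"
        "time.sleep(60)\n"
    )
    proc = subprocess.Popen(
        [sys.executable, "-c", code], stdout=subprocess.PIPE, text=True
    )
    proc.stdout.readline()  # wait until the child has installed its handler
    return proc
```

A process blocked in uninterruptible I/O does not act on SIGKILL until it returns from the kernel, which may be one reason a kill -9 can appear to be delayed.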

Because MariaDB was not shut down properly, database corruption is a possibility. Accordingly, I am running mariadb-repair --all-databases to check for and repair any inconsistencies. This may slow response slightly for the next hour or so.

Mail system is down. Something very weird happened when I rebooted the server it is hosted on. It did not find the qemu img for the mail spool at the location specified in the XML file, and the location was obviously wrong, as the directory did not exist. Yet I had rebooted the server many times before. I found a spool img, but it is two weeks old. The current one seems to have just vanished from the disk. I have a backup from last Friday, but that is as current as it gets. So before I turn mail up, I am doing a thorough search for the spool image before I give up and restore from a six-day-old backup. This is behavior I’ve never seen before. My automatic rsync also failed to run since the last backup, so I can’t restore from that either. I am doing my best to recover.
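One way to catch a stale image path before a reboot is to check that every file-backed disk named in the VM definition actually exists. The sketch below assumes the usual libvirt domain XML layout, where each disk is a `<disk type='file'>` element with a `<source file='...'/>` child. The function name `missing_disk_images` and the paths in the usage note are hypothetical.

```python
import os
import xml.etree.ElementTree as ET


def missing_disk_images(domain_xml: str) -> list[str]:
    """Return file-backed disk paths from a libvirt domain XML that do not exist."""
    root = ET.fromstring(domain_xml)
    missing = []
    # Only file-backed disks carry a 'file' attribute on <source>.
    for source in root.findall("./devices/disk[@type='file']/source"):
        path = source.get("file")
        if path and not os.path.exists(path):
            missing.append(path)
    return missing
```

Fed the output of `virsh dumpxml` for a guest, this would return something like `['/some/hypothetical/spool.img']` if the definition points at an image that is no longer on disk.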

Mail is presently down while it is being moved from the Iglulik server to inuvik. I am doing this because Iglulik is showing signs of instability, and I want to get mail, a critical service, OFF of this machine before it crashes and burns.

This was THE only machine that would not boot 6.14.2; it could not find the root partition, even though 6.14.0 finds it fine. I do not know what is going on here, as none of the other machines had this issue with 6.14.2.

In addition, the USB power is intermittent, which is not a good sign, and it keeps thinking it has lost its BIOS configuration. If I just save the configuration again it is still the same, so it is actually reading it OK but doesn’t think that it is. To add to all of this, it has locked up twice recently. So basically it’s showing signs of being near death, and I’m trying to move everything off before it dies. Then I will consider what I want to do with this box. There is plenty of capacity on ice and inuvik to handle the services this machine is presently handling.

Squirrelmail Moved

Squirrelmail has been moved to our new web server. The menu items through our home page and web-apps will work as normal, but the direct URL has changed to https://squirrelmail.eskimo.com/.

Mail Up

Mail is back up and is now on a stable but slower platform. I will need to reload Linux on the other machine, as it has somehow become badly corrupted. Systemd is not acting properly on the larger machine: it is starting services even though they are masked, particularly systemd-networkd, which needs NOT to be running as I use network-manager, and that breaks the network. It’s not impossible that it has become infected with some sort of malware, though the last time I had machines with a confirmed virus was three decades ago. But then there are those among us who consider systemd to be malware… I have looked and can’t find anything, but it is possible to disguise things as system processes, etc. Given, though, that this happened right after a drive went south, it is more likely just corruption of something somewhere, maybe multiple somethings. Then again, our web server was recently the target of a denial of service attack, so who knows. Cleaning it out and starting fresh is the safest bet.

Mail Maintenance

Mail is going to be down for approximately two hours while it moves from its present host, which has some software networking instability issues and needs a reload, to a stable host. This involves copying 400GB of data across a 1Gb/s link, so I expect it to be back up around 23:00 March 15th.
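As a rough sanity check on that estimate, the arithmetic can be sketched in a few lines of Python. At full line rate the copy would take a bit under an hour, so a two-hour window allows for the link running at somewhat less than half of its nominal speed. The `efficiency` parameter and the 0.45 value are assumptions for illustration, not measurements.

```python
def transfer_minutes(size_gb: float, link_gbps: float, efficiency: float = 1.0) -> float:
    """Minutes needed to copy size_gb (decimal gigabytes) over a link_gbps link.

    efficiency is the assumed fraction of the nominal link rate actually achieved.
    """
    gigabits = size_gb * 8
    seconds = gigabits / (link_gbps * efficiency)
    return seconds / 60


line_rate = transfer_minutes(400, 1.0)        # 3200 s, about 53 minutes
realistic = transfer_minutes(400, 1.0, 0.45)  # about 118 minutes at an assumed 45% of line rate
```

Disk speed, protocol overhead, and other traffic on the link all eat into the nominal rate, which is why the window is padded well beyond the line-rate figure.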

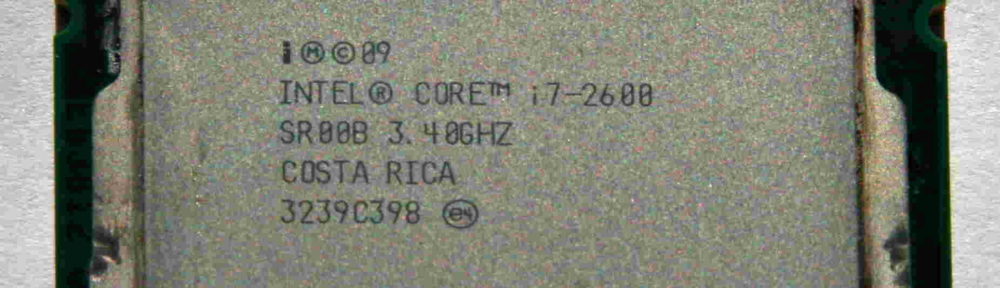

Inuvik – Still Trying to Kill me

Inuvik, the server that runs all the social media and also runs the manjaro and alma shell servers, is still out of service. I can’t get an Asus X299 board that doesn’t have dead memory channels; they are all just shit. I got a Gigabyte board that did work, but one of its SATA controllers went south, so four ports don’t work and the remaining four take errors. Also, the video card slot is intermittent. I suspect the bad SATA controller chip is putting noise on the bus and screwing everything else up. I’ve ordered another one of these boards; it will be another week or so before it gets here. I’m not sure why the SATA port crapped out, that is just weird.